The Rise of Multimodal AI: Integrating Multiple Data Sources for Enhanced Performance

Introduction

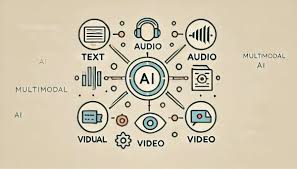

Artificial intelligence (AI) has advanced significantly in recent years, evolving from simple rule-based systems to complex machine learning models capable of analyzing vast amounts of data. One of the most exciting developments in AI is multimodal AI, a technology that integrates various data sources—such as text, images, audio, and video—to improve decision-making. By mimicking how humans process information from different sensory inputs, it enhances accuracy, efficiency, and adaptability in various applications.

What is Multimodal AI?

Multimodal AI refers to artificial intelligence systems that can process and interpret multiple types of data simultaneously. Traditional AI models often rely on a single data modality, such as text-based chatbots or image recognition algorithms. However, by combining different data streams, AI can achieve a more comprehensive understanding of information.

For instance, a voice assistant such as Amazon Alexa or Google Assistant, when running on a device with a display, pairs spoken commands with on-screen responses. Merging data types in this way makes AI systems more intuitive and effective.

How Multimodal AI Works

Multimodal AI relies on fusion techniques, which combine different types of data into a unified representation. The three main approaches are:

- Early Fusion – Raw data from different modalities is merged before being processed by an AI model.

- Late Fusion – Each data type is processed separately, and their outputs are combined later.

- Hybrid Fusion – A mix of early and late fusion, where some data types are merged at the input stage while others are combined at a later phase.

By employing these methods, AI can correlate information from multiple sources for more accurate predictions. For example, in autonomous vehicles, cameras, LiDAR sensors, and GPS work together to enhance navigation and safety.
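The three fusion strategies can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the feature vectors stand in for already-extracted image, audio, and text features, the weights are hand-picked rather than learned, and every function name here (`linear_score`, `early_fusion`, `late_fusion`, `hybrid_fusion`) is hypothetical.

```python
import math


def sigmoid(x: float) -> float:
    """Squash a real-valued score into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def linear_score(features, weights, bias=0.0):
    """A stand-in 'model': weighted sum of features passed through a sigmoid."""
    return sigmoid(sum(f * w for f, w in zip(features, weights)) + bias)


def early_fusion(image_feats, audio_feats, joint_weights):
    """Early fusion: concatenate the modalities first, then run one model."""
    fused = list(image_feats) + list(audio_feats)
    return linear_score(fused, joint_weights)


def late_fusion(image_feats, audio_feats, image_weights, audio_weights, mix=0.5):
    """Late fusion: run one model per modality, then blend their outputs."""
    p_image = linear_score(image_feats, image_weights)
    p_audio = linear_score(audio_feats, audio_weights)
    return mix * p_image + (1.0 - mix) * p_audio


def hybrid_fusion(image_feats, audio_feats, text_feats,
                  joint_weights, text_weights, mix=0.5):
    """Hybrid fusion: early-fuse image and audio, then late-fuse with text."""
    p_joint = early_fusion(image_feats, audio_feats, joint_weights)
    p_text = linear_score(text_feats, text_weights)
    return mix * p_joint + (1.0 - mix) * p_text


# Toy inputs (assumed values, for illustration only).
image_feats = [0.2, 0.9]
audio_feats = [0.7]
text_feats = [0.4]

p_early = early_fusion(image_feats, audio_feats, joint_weights=[1.0, -0.5, 2.0])
p_late = late_fusion(image_feats, audio_feats,
                     image_weights=[1.0, -0.5], audio_weights=[2.0])
p_hybrid = hybrid_fusion(image_feats, audio_feats, text_feats,
                         joint_weights=[1.0, -0.5, 2.0], text_weights=[1.5])
print(f"early={p_early:.3f} late={p_late:.3f} hybrid={p_hybrid:.3f}")
```

In practice the per-modality models would be neural networks and the blending weights would usually be learned, but the order of operations (merge then predict, or predict then merge) is what separates the approaches.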

Applications of Multimodal AI

Multimodal AI is changing how machines interpret and interact with data across several industries. Below are some key applications:

1. Healthcare

Multimodal AI is transforming healthcare by combining medical imaging, electronic health records, and patient history to improve diagnostics and treatment recommendations. For example:

- Systems such as IBM Watson Health (whose business now operates as Merative) have been used to analyze X-rays, MRI scans, and patient data to assist doctors in diagnosing diseases like cancer.

- AI-powered chatbots in telemedicine combine voice recognition with facial-expression analysis to assess patient symptoms more accurately.

2. Autonomous Vehicles

Self-driving cars depend on AI to ensure safe navigation. These vehicles integrate data from multiple sources, such as:

- Cameras for object detection

- LiDAR sensors for depth perception

- GPS for location tracking

Companies like Tesla and Waymo use AI-driven systems to interpret real-time traffic conditions and make split-second driving decisions.

3. Retail and E-Commerce

In the retail industry, AI enhances customer experiences through personalized recommendations and improved search capabilities. Examples include:

- Amazon’s visual search feature, which allows customers to take a picture of a product and receive relevant suggestions.

- Chatbots with voice and text capabilities, enabling seamless customer support interactions.

4. Entertainment and Media

Streaming services and content platforms utilize AI to improve user engagement. Examples include:

- Netflix and YouTube, which analyze viewing history and search queries to recommend personalized content.

- Deepfake technology, which combines video and audio processing to create realistic digital representations of people.

5. Security and Surveillance

AI is enhancing security through facial recognition, speech analysis, and behavioral monitoring. For instance:

- AI-powered CCTV systems analyze video footage and audio signals to detect suspicious activities.

- Border security applications use biometric verification such as fingerprints and facial scans.

Challenges in Implementing Multimodal AI

While this technology offers immense potential, it also presents several challenges:

1. Data Integration Complexity

Combining various types of data requires sophisticated algorithms to ensure compatibility and consistency. Misalignment between data sources can lead to inaccurate predictions.
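One common source of misalignment is timing: sensors rarely sample at the same rate. The sketch below pairs each reading from one stream with the nearest reading from another and drops pairs that are too far apart. The function name `align_nearest`, the sample timestamps, and the tolerance are all assumptions chosen for illustration.

```python
from bisect import bisect_left


def align_nearest(ts_a, ts_b, tolerance):
    """Pair each timestamp in ts_a with the nearest timestamp in sorted ts_b.

    Returns a list of (index_in_a, index_in_b) tuples; index_in_b is None
    when no timestamp in ts_b lies within `tolerance` seconds.
    """
    pairs = []
    for i, t in enumerate(ts_a):
        pos = bisect_left(ts_b, t)
        # Only the neighbours on either side of the insertion point can be nearest.
        candidates = [j for j in (pos - 1, pos) if 0 <= j < len(ts_b)]
        if not candidates:
            pairs.append((i, None))
            continue
        best = min(candidates, key=lambda j: abs(ts_b[j] - t))
        pairs.append((i, best if abs(ts_b[best] - t) <= tolerance else None))
    return pairs


# Hypothetical timestamps in seconds: a ~30 Hz camera and a 10 Hz LiDAR.
camera_ts = [0.00, 0.033, 0.066, 0.100]
lidar_ts = [0.00, 0.10, 0.20]

pairs = align_nearest(camera_ts, lidar_ts, tolerance=0.02)
for cam_idx, lidar_idx in pairs:
    print(cam_idx, lidar_idx)
```

Frames left unpaired (`None`) can be skipped or filled by interpolation; which is appropriate depends on the application.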

2. Computational Costs

Processing multiple data streams demands significant computing power, increasing infrastructure costs and energy consumption.

3. Bias and Ethical Concerns

AI models can inherit biases from training data, leading to unfair or inaccurate results. Ensuring fairness requires careful dataset selection and continuous monitoring.
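A simple form of continuous monitoring is to compare a model's accuracy across groups in an evaluation set. The following sketch assumes you already have true labels, predictions, and a group tag per example; the helper names and the toy data are hypothetical, and accuracy gap is only one of several possible fairness measures.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return a dict mapping each group label to the accuracy within that group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


def max_accuracy_gap(per_group):
    """Largest difference in accuracy between any two groups."""
    values = list(per_group.values())
    return max(values) - min(values)


# Toy evaluation data (assumed values, for illustration only).
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

per_group = accuracy_by_group(y_true, y_pred, groups)
gap = max_accuracy_gap(per_group)
print(per_group, gap)
```

A large gap does not by itself prove the model is unfair, but it flags where the training data or the model deserves a closer look.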

Future Trends in Multimodal AI

As AI continues to evolve, several trends are shaping its future:

- Advancements in Deep Learning – Improved neural networks will enhance AI’s ability to understand complex data relationships.

- Human-AI Collaboration – More intuitive systems will interact seamlessly with humans, improving user experiences in fields like education and healthcare.

- Real-time Multimodal Processing – AI will become more efficient at handling multiple data types in real time, benefiting self-driving cars and virtual assistants.

- Enhanced Natural Language Understanding (NLU) – AI will better interpret and respond to multimodal inputs in a more human-like manner.

Conclusion

Multimodal AI represents a significant leap forward in artificial intelligence, enabling systems to process and integrate multiple data sources for enhanced performance. From healthcare to autonomous vehicles, this technology is transforming industries by improving accuracy, efficiency, and user interactions. However, challenges like data integration and ethical concerns must be addressed to unlock its full potential. As research progresses, this AI-driven approach will continue shaping the future of intelligent computing, making machines more perceptive, adaptive, and useful in our everyday lives.
